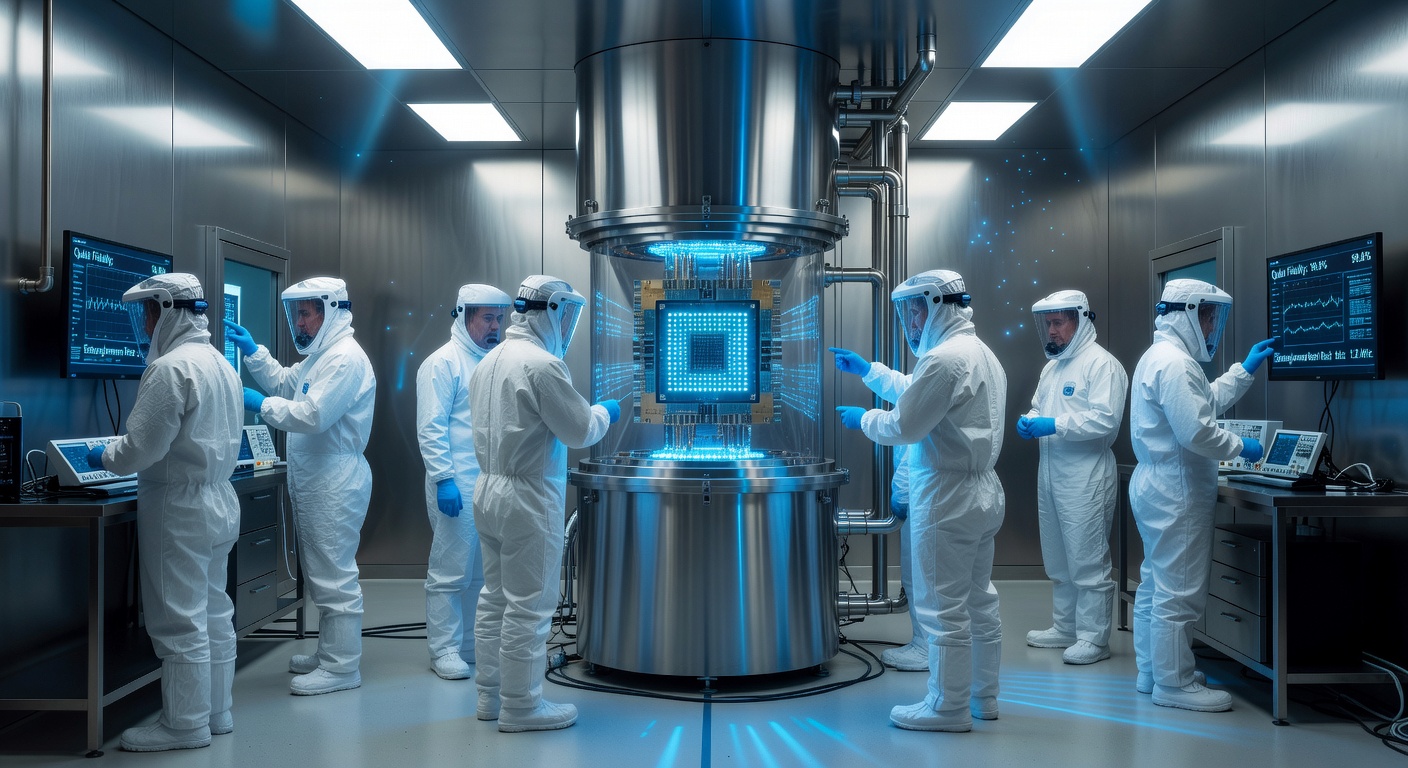

For thirty years, quantum computing has been arriving. Every few years, a new milestone — a new qubit record, a new demonstration of quantum supremacy for a specific task — would generate breathless coverage and then quiet. The machines were real but fragile, the error rates too high for practical use, the operating conditions requiring temperatures colder than deep space. Then, in 2024 and 2025, something shifted. Google's Willow chip achieved a 105-qubit computation in under five minutes that would take the world's fastest supercomputer ten septillion years. Microsoft announced a topological qubit architecture that reduces error rates by orders of magnitude. IBM's quantum roadmap put 100,000-qubit systems within five years. Quantum computing is not arriving anymore. It is here — and the world is only beginning to understand what that means.

The transition from theoretical promise to practical computation has been less dramatic than futurists predicted and more consequential than skeptics allowed. The question has never really been whether quantum computers would work; the physics is sound. The question has always been when the error rates would fall low enough, and the qubit counts climb high enough, for the machines to solve problems that classical computers cannot. That inflection point is now, by most accounts, within reach rather than decades away.

Classical computers operate on bits: switches that are either 0 or 1. Every operation — from streaming video to simulating a protein fold — is ultimately a very long sequence of switch operations. Quantum computers operate on qubits, which exploit quantum superposition to exist in a combination of 0 and 1 simultaneously until measured. This is not magic — it is a carefully engineered exploitation of quantum mechanical properties that has been demonstrated to be physically real.

The power derives from what happens when you entangle multiple qubits. A classical system with 300 bits can hold any single one of 2^300 possible states. Describing the state of 300 entangled qubits requires, in general, 2^300 complex amplitudes, one for each of those states, and a single quantum operation updates all of them at once. The catch is that a measurement returns only one outcome, so a quantum algorithm has to arrange for the amplitudes to interfere so that wrong answers cancel and right answers reinforce. 2^300 is a number vastly larger than the number of atoms in the observable universe. This exponential scaling is what gives quantum computers their potential advantage, and it is why certain problem types that are computationally intractable for classical computers become tractable for quantum ones.
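To put that number in perspective, here is a small arithmetic sketch. It compares 2^300 with a commonly quoted estimate of about 10^80 atoms in the observable universe, and estimates the memory a classical machine would need to store one complex number per state. Both the atom estimate and the 16 bytes per stored number are assumptions made for illustration.

```python
# Assumption: ~10^80 is a commonly quoted order-of-magnitude estimate
# for the number of atoms in the observable universe.
ATOMS_IN_UNIVERSE_ESTIMATE = 10 ** 80

# Assumption: one complex amplitude stored as two 64-bit floats.
BYTES_PER_AMPLITUDE = 16


def basis_state_count(n_qubits: int) -> int:
    """Number of distinct bit strings (basis states) for n qubits."""
    return 2 ** n_qubits


def statevector_bytes(n_qubits: int) -> int:
    """Memory needed to store one amplitude per basis state."""
    return basis_state_count(n_qubits) * BYTES_PER_AMPLITUDE


states_300 = basis_state_count(300)
print("decimal digits in 2^300:", len(str(states_300)))
print("2^300 exceeds the atom estimate:", states_300 > ATOMS_IN_UNIVERSE_ESTIMATE)
print("GiB needed to store 30 qubits:", statevector_bytes(30) / 2 ** 30)
```

Every extra qubit doubles the memory requirement, which is why brute-force classical simulation runs out of room at a few dozen qubits.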

In December 2024, Google published results from its Willow quantum chip in Nature that the research community broadly accepted as a genuine milestone. The chip performed a random circuit sampling benchmark computation in under five minutes. The same computation would take the fastest current classical supercomputer 10^25 years — longer than the age of the universe by many orders of magnitude. This was not a practical computation. Random circuit sampling is essentially the quantum equivalent of spinning roulette wheels — useful for benchmarking, not for solving real-world problems. But it demonstrated conclusively that quantum systems can now perform certain computations beyond any conceivable classical capability.

More significantly, Willow achieved something that had eluded quantum computing since error-correcting codes were first proposed in the 1990s: below-threshold error correction. Quantum bits are inherently noisy; environmental disturbances cause errors. Error correction spreads one logical qubit across many physical qubits, but in earlier machines each added physical qubit introduced more errors than the code could remove, so larger codes performed worse. Willow demonstrated the opposite: adding more qubits to the code reduces the logical error rate, so the system improves as it scales. This is the theoretical prerequisite for building large-scale fault-tolerant quantum computers, and it had never been demonstrated at this scale before.
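The "improves as it scales" behaviour can be sketched with a simple model. In a surface code, the code distance d sets how many physical qubits protect one logical qubit (2d² − 1 in the standard layout), and below threshold the logical error rate is expected to shrink by a roughly constant factor each time d grows by 2. The suppression factor and starting error rate used below are illustrative assumptions, not Willow's measured values.

```python
def surface_code_qubits(distance: int) -> int:
    """Physical qubits in a distance-d surface code: d^2 data + d^2 - 1 measure."""
    return 2 * distance ** 2 - 1


def logical_error_rate(distance: int, base_rate: float, suppression: float,
                       base_distance: int = 3) -> float:
    """Model: the error rate is divided by `suppression` per +2 in distance.

    suppression > 1 means below threshold (bigger codes are better);
    suppression < 1 means above threshold (bigger codes are worse).
    """
    steps = (distance - base_distance) / 2
    return base_rate / (suppression ** steps)


# Hypothetical numbers chosen only to show the two regimes.
for label, factor in [("below threshold", 2.0), ("above threshold", 0.5)]:
    print(label)
    for d in (3, 5, 7):
        rate = logical_error_rate(d, base_rate=3e-3, suppression=factor)
        print(f"  d={d}  qubits={surface_code_qubits(d):3d}  error/cycle={rate:.2e}")
```

With a suppression factor above 1, each step up in code size buys a lower error rate; with a factor below 1, the same extra qubits make things worse, which is the regime earlier hardware was stuck in.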

"The Willow results are significant not because random circuit sampling is useful, but because below-threshold error correction changes the fundamental trajectory. We are now on a path where the problems get more tractable as the machines get bigger, rather than the error rates eating the advantage." — Prof. John Martinis, University of California Santa Barbara

Microsoft took a fundamentally different approach to quantum computing, and in early 2025 published results suggesting its long bet has paid off. While most quantum computers use superconducting qubits or trapped ions — both of which require extremely careful isolation to avoid decoherence — Microsoft's approach uses topological qubits based on exotic quasiparticles called non-Abelian anyons. Topological qubits are inherently more stable because their quantum information is stored in the global topology of the system rather than in a local physical property that can be disturbed.

The result, demonstrated in a paper in Nature Physics, was a qubit with error rates roughly 1,000 times lower than state-of-the-art superconducting qubits. If this scales — and independent researchers are cautiously optimistic — it means Microsoft's architecture could reach fault-tolerant quantum computation with far fewer physical qubits than other approaches, dramatically accelerating the timeline to practical quantum advantage.

The applications most frequently cited are accurate but often misunderstood in their timeline and character. Drug discovery and molecular simulation represent the strongest near-term case: quantum computers can simulate quantum systems (molecules are quantum systems) with an efficiency that classical computers fundamentally cannot match. A quantum computer simulating a complex protein-drug interaction can explore the quantum mechanical landscape of binding energies in ways that classical force-field approximations cannot. This does not replace drug development — it accelerates and enriches the computational step of it.

Materials science is the adjacent domain: designing room-temperature superconductors, better battery electrolytes, more efficient solar cell materials — all involve finding molecular configurations whose properties emerge from quantum effects. Classical simulation approximates; quantum simulation solves.

Cryptography is the application that generates the most alarm. Current public-key cryptography depends on the computational difficulty of two related problems: factoring large integers (RSA) and computing discrete logarithms (elliptic curve). Shor's algorithm, running on a sufficiently powerful quantum computer, can solve both efficiently. Cryptographers estimate that a quantum computer with approximately 4,000 stable logical qubits could break RSA-2048 encryption. We are not there yet, and fault-tolerant logical qubits remain years away, but the timeline has compressed dramatically, and the world's national security establishments have noticed. Post-quantum cryptography standards were finalised by NIST in 2024. The migration of critical infrastructure to quantum-resistant encryption is already underway.
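Why does a quantum computer threaten factoring at all? Shor's algorithm turns factoring into the problem of finding the period (the multiplicative order) of a number modulo N, and the quantum hardware is only needed for that one step. The sketch below shows the classical wrapper around it, with the quantum period-finding step replaced by brute force so that it runs on a laptop for tiny numbers. The function names are illustrative, and the brute-force loop is exactly the part that becomes infeasible at RSA sizes.

```python
from math import gcd


def multiplicative_order(a: int, n: int) -> int:
    """Smallest r > 0 with a**r % n == 1.

    Brute force stands in for the quantum period-finding step.
    """
    if gcd(a, n) != 1:
        raise ValueError("a and n must be coprime")
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r


def factor_from_order(a: int, n: int):
    """Try to split n using base a; return (p, q) or None if this base fails."""
    shared = gcd(a, n)
    if shared != 1:
        return shared, n // shared      # the guess already shares a factor
    r = multiplicative_order(a, n)
    if r % 2 == 1:
        return None                     # odd period: pick another base
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None                     # trivial square root: pick another base
    p = gcd(half - 1, n)
    return p, n // p


print(factor_from_order(7, 15))
print(factor_from_order(2, 21))
```

A base that fails is simply replaced with another random one; for numbers of the RSA form, a good share of bases succeed.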

Current superconducting quantum computers operate at temperatures around 15 millikelvin, colder than the average temperature of deep space (2.7 kelvin). This requires enormous dilution refrigerators, significant power consumption, and infrastructure that makes quantum computers currently unsuitable for anything but fixed research installations or data centers. This is not a theoretical barrier. It is an engineering one, and engineering problems have a history of yielding to sustained effort. Trapped-ion computers, which hold laser-cooled ions in a vacuum chamber and do not need a dilution refrigerator, offer a path that avoids the cooling problem at the cost of slower gate speeds. Photonic quantum computers, where qubits are encoded in light, can run much of their hardware at room temperature, although some designs still cool their photon detectors. The competition between modalities is unresolved, which is a sign of a healthy industry that has not yet settled on a dominant design rather than a sign of fundamental limitation.

The broader ecosystem around quantum hardware is maturing rapidly. Cloud access to quantum processors through IBM Quantum, Amazon Braket, Microsoft Azure Quantum, and Google Quantum AI has given researchers worldwide hands-on experience with real quantum hardware. Programming frameworks — Qiskit, Cirq, PennyLane — have lowered the barrier to writing quantum algorithms. Quantum error correction codes are now a mature academic discipline. The talent pipeline is building: quantum computing and quantum information science courses are now offered at hundreds of universities. The infrastructure for a quantum computing industry — not just a quantum computing research programme — is taking shape. What comes next will not be an announcement. It will be a gradual accumulation of applications, each one small in isolation, collectively transformative.

At 6:32 AM, your cortisol peaks — the biological alarm clock that rouses you more reliably than any phone. By 10:00 AM, your alertness and short-term memory are at their daily maximum. By 2:30 PM, your reaction time is fastest. At 5:00 PM, your cardiovascular efficiency peaks — the ideal time for athletic performance. By 9:00 PM, melatonin floods the bloodstream and the body begins its repair cycle. This isn't astrology. It's chronobiology, and the science of human circadian rhythms has advanced so rapidly in the past decade that we now know — with molecular precision — why timing is not just relevant to health. It is health.

The 2017 Nobel Prize in Physiology or Medicine went to Jeffrey Hall, Michael Rosbash, and Michael Young for their work uncovering the molecular mechanisms of circadian rhythms. The prize recognised three decades of research demonstrating that virtually every cell in the human body contains a molecular clock: a feedback loop of interacting proteins that cycles with a period of approximately 24 hours, entrained by light, temperature, and feeding signals from the environment. The implications of this discovery for medicine, performance, and daily life are still being worked out.

The body's master clock sits in the suprachiasmatic nucleus (SCN) — a tiny region of the hypothalamus containing about 20,000 neurons. The SCN receives direct light input from the retina via a dedicated neural pathway, and uses this information to synchronise the molecular clocks in every other tissue and organ. The liver clock controls the timing of glucose metabolism. The muscle clock controls protein synthesis and repair. The immune system clock controls the timing of inflammatory responses. Even individual cells in a Petri dish, isolated from all external signals, continue to cycle with approximately 24-hour rhythms — the clock is genuinely cellular, not just organismal.

This architecture has profound implications. The liver processes drugs differently at different times of day. The immune system mounts inflammatory responses more aggressively at certain hours. Blood pressure follows a daily curve with characteristic peaks and troughs. Cancer cells divide on circadian schedules. None of this was taken seriously in medicine for most of the 20th century. It is now one of the fastest-growing areas of biomedical research.

A 2021 meta-analysis in The Lancet Oncology reviewed 71 clinical trials of chemotherapy and found that survival outcomes varied significantly depending on the time of day treatment was administered. For colorectal cancer treated with oxaliplatin, 5-fluorouracil, and leucovorin, patients receiving the protocol in the morning experienced 41% more severe side effects than those treated in the afternoon — with no difference in efficacy. The cancer cells were equally vulnerable. The healthy cells that generate side effects were not.

Cardiovascular drugs show comparable patterns. Aspirin taken at bedtime reduces blood pressure more effectively than morning dosing, consistent with the circadian pattern of morning blood pressure surges that drive the well-documented morning peak in heart attacks and strokes. Short-acting statins taken in the evening lower cholesterol more effectively than morning doses because the liver's cholesterol synthesis pathway peaks overnight. Antihistamines taken at night are more effective for overnight allergic responses because the immune system's histamine response peaks in the small hours. The correct time to take a drug is not merely a matter of convenience. For some medications, it is clinically significant.

"We are practising medicine as if our patients were biological constants — as if a cell at 3 PM were the same as a cell at 3 AM. They are not. The implications of taking chronobiology seriously in clinical practice are substantial." — Prof. Satchidananda Panda, Salk Institute for Biological Studies

Time-restricted eating (TRE) — consuming all food within a defined window, typically 8-10 hours, aligned with daytime light exposure — has emerged as one of the most evidence-backed dietary interventions in recent research. Unlike caloric restriction, TRE does not require counting calories or tracking macronutrients. It simply anchors eating to the body's natural metabolic window and gives the digestive and metabolic systems a prolonged overnight fast.

A 2022 randomised controlled trial in the New England Journal of Medicine found that TRE in obese adults (8-hour eating window, 7 AM to 3 PM) produced significant improvements in blood glucose, blood pressure, and inflammatory markers — without any caloric restriction. Participants were explicitly instructed to eat whatever they wanted, as much as they wanted, just within the window. A 2023 study in Nature Metabolism found that TRE improved insulin sensitivity, reduced visceral fat, and lowered LDL cholesterol over 12 weeks in adults with metabolic syndrome. The mechanism appears to involve restoration of circadian alignment in metabolic tissues — allowing the liver, pancreas, and intestinal cells to complete their overnight repair and housekeeping cycles before being asked to process new food.

Individual variation in circadian timing — the difference between larks and owls — is not a character flaw or a lifestyle choice. It is genetic. The primary clock gene, CLOCK, and its partners PER1, PER2, CRY1, and CRY2, vary between individuals in ways that shift the phase of the entire circadian system by hours. True "evening chronotypes" (owls) have biological clock phases that run 2-4 hours later than morning types. Their cortisol peak, alertness peak, and melatonin onset all shift accordingly.
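What a phase delay means in practice is easy to see with a little clock arithmetic. The sketch below takes the daily milestones quoted at the start of this article and shifts them all by the same number of hours. Treating every rhythm as shifting by an identical amount is a simplifying assumption, and the function name is illustrative.

```python
from datetime import datetime, timedelta

# Reference times quoted earlier in the article for a typical clock.
REFERENCE_MILESTONES = {
    "cortisol peak": "06:32",
    "alertness peak": "10:00",
    "fastest reaction time": "14:30",
    "cardiovascular peak": "17:00",
    "melatonin onset": "21:00",
}


def shift_milestones(phase_delay_hours: float) -> dict:
    """Return each milestone moved later by the given phase delay."""
    shifted = {}
    for name, clock_time in REFERENCE_MILESTONES.items():
        moment = datetime.strptime(clock_time, "%H:%M")
        moment += timedelta(hours=phase_delay_hours)
        shifted[name] = moment.strftime("%H:%M")  # wraps past midnight
    return shifted


# An evening type running three hours late (within the 2-4 hour range above).
for name, clock_time in shift_milestones(3).items():
    print(f"{name:22s} {clock_time}")
```

For a three-hour owl, the alertness peak lands in the early afternoon and melatonin onset at midnight, which is why an 8:00 AM start catches them well before their biological morning.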

This has major implications for work scheduling. School start times have been a particular focus: multiple studies, including a 2019 systematic review in Sleep Medicine Reviews, have found that adolescent chronotype shifts substantially toward eveningness during puberty and doesn't return to morning preference until the mid-20s. Early school start times force adolescents to perform cognitively demanding work during what is, for their biology, the middle of the night. The American Academy of Pediatrics recommends school start times of no earlier than 8:30 AM for middle and high school students specifically on chronobiological grounds. Many districts have complied; outcomes data shows improvements in attendance, academic performance, and mental health.

Chronic circadian misalignment — living out of sync with one's biological clock — carries measurable health costs. A 2020 study in JAMA Internal Medicine followed 68,000 women over 22 years and found that nurses working rotating shifts had significantly elevated rates of cardiovascular disease, type 2 diabetes, and cancer. The association was dose-dependent: more years of shift work meant higher risk. The mechanism involves disruption of the rhythmic release of hormones, disruption of immune function timing, and sustained metabolic stress from eating at biologically inappropriate times.

The practical implication is that circadian health — maintaining consistent sleep and eating schedules aligned with daylight — is as important a health parameter as diet and exercise. Light exposure management (bright light in the morning, reduced blue light in the evening) is the most potent lever. Eating within a consistent daytime window is the second. Consistent sleep and wake times, even on weekends, preserve the entrainment of peripheral clocks that drive metabolic health. The body clock is not metaphorical. It is molecular, measurable, and manageable — and the evidence that managing it matters for health is now overwhelming.

In 2016, researchers at the Kyoto Institute of Technology made a discovery that seemed almost too convenient: a bacterium living in a bottle-sorting facility in Japan that had evolved to eat PET plastic — the material in most disposable water bottles. The bacterium, Ideonella sakaiensis, had acquired two novel enzymes that together could break down PET into its constituent chemicals. It wasn't fast. It wasn't efficient enough to matter industrially. But it proved something that changed the field: plastic is not an inert, impervious material. Given enough evolutionary pressure, biology finds a way. The decade since has been spent engineering that way to go faster.

The plastic problem has a number of distinct dimensions that are often conflated. There is plastic waste in oceans (8 million tonnes per year entering marine environments). There is microplastic contamination of food, water, and human tissue (microplastics have been found in human lungs, blood, breast milk, and placental tissue). There is the problem of plastic in landfills (most plastic is not recycled; globally, only 9% of all plastic ever produced has been recycled). And there is the emissions problem: plastic production accounts for approximately 4% of global greenhouse gas emissions, and is projected to account for 20% of oil consumption by 2050. Enzymatic plastic degradation addresses the waste and recycling dimensions, not the production problem, but the potential is nonetheless transformative.

The enzyme discovered in Ideonella sakaiensis, dubbed PETase, breaks PET plastic by cleaving the ester bonds that hold the polymer chains together, producing terephthalic acid (TPA) and ethylene glycol (EG) — the same molecules used to make PET in the first place. This is crucial: enzymatic degradation produces feedstocks for new plastic, not waste products. If industrial-scale enzymatic recycling were achievable, it would close the PET loop entirely — transforming plastic waste into virgin-quality feedstock indefinitely.
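The chemistry behind that closed loop can be checked with a mass balance. Each repeat unit of PET (C10H8O4) reacts with two molecules of water to give one molecule of TPA (C8H6O4) and one of EG (C2H6O2). The sketch below computes the theoretical yield per tonne of PET from standard atomic masses. It assumes pure polymer and complete conversion, and it ignores chain ends, additives, and process losses.

```python
# Standard atomic masses in g/mol.
ATOMIC_MASS = {"C": 12.011, "H": 1.008, "O": 15.999}

PET_REPEAT_UNIT = {"C": 10, "H": 8, "O": 4}
WATER = {"H": 2, "O": 1}
TPA = {"C": 8, "H": 6, "O": 4}   # terephthalic acid
EG = {"C": 2, "H": 6, "O": 2}    # ethylene glycol


def molar_mass(formula: dict) -> float:
    """Molar mass in g/mol for a formula given as {element: count}."""
    return sum(ATOMIC_MASS[element] * count for element, count in formula.items())


def hydrolysis_yield(pet_tonnes: float) -> dict:
    """Theoretical tonnes of water consumed and products formed."""
    per_unit = pet_tonnes / molar_mass(PET_REPEAT_UNIT)
    return {
        "water_in": per_unit * 2 * molar_mass(WATER),
        "tpa_out": per_unit * molar_mass(TPA),
        "eg_out": per_unit * molar_mass(EG),
    }


result = hydrolysis_yield(1.0)
for name, tonnes in result.items():
    print(f"{name}: {tonnes:.3f} t per tonne of PET")
```

The products weigh more than the plastic that went in because the water consumed in breaking each ester bond ends up in the TPA and EG.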

The problem was speed. Natural PETase degrades PET so slowly that a single bottle would take years to process. The enzyme is functional but glacially slow by industrial standards. The response from synthetic biologists was to redesign it. In 2018, a team at the University of Portsmouth and NREL (the US National Renewable Energy Laboratory), while attempting to understand PETase's structure, accidentally created a mutant version twice as fast as the natural enzyme. The mutation was a structural change near the active site that improved the enzyme's fit to PET's polymer chains. A subsequent iteration, FAST-PETase, developed by the University of Texas Austin in 2022, degraded PET 5 times faster than the best previous engineered variants and depolymerised plastic waste at room temperature in under 24 hours.

"The natural enzyme was a proof of concept. The engineered variants are becoming a technology. The gap between those two things is being closed faster than anyone expected in 2016." — Prof. Hal Alper, University of Texas Austin

The French company Carbios has taken enzymatic PET recycling closest to industrial scale. Their process uses a thermophilic (heat-tolerant) PET-degrading enzyme, engineered from a cutinase rather than from Ideonella's PETase, that operates at 72°C, close to the temperature at which PET softens. The heat gives the enzyme both better access to the polymer chains and faster kinetics. Their pilot plant in Saint-Fons, France, processed 2 tonnes of PET waste in 10 hours in 2021 trials, producing TPA and EG at 97% recovery rates. Commercial-grade PET bottles were then made from the recovered feedstock and independently certified as identical in quality to virgin PET.

L'Oréal, Nestlé Waters, and PepsiCo are among the industrial partners in Carbios's consortium. An industrial facility capable of processing 50,000 tonnes of PET waste per year is planned for 2026. For context, global PET production is approximately 70 million tonnes per year. One facility is not the solution — but it is a proof-of-scale that moves enzymatic recycling from laboratory demonstration to industrial category.
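The gap between one plant and the global problem is easy to quantify. This short sketch uses only the two figures quoted above; the function names are illustrative, and the plant count assumes, unrealistically, that all PET produced each year would be collected and sent for enzymatic recycling.

```python
import math

PLANNED_PLANT_TONNES_PER_YEAR = 50_000       # figure quoted above
GLOBAL_PET_TONNES_PER_YEAR = 70_000_000      # figure quoted above


def share_of_global_output(plant_tonnes: float, global_tonnes: float) -> float:
    """Fraction of annual global PET production one plant could process."""
    return plant_tonnes / global_tonnes


def plants_needed(plant_tonnes: float, global_tonnes: float) -> int:
    """Plants of this size needed to match annual global PET production."""
    return math.ceil(global_tonnes / plant_tonnes)


share = share_of_global_output(PLANNED_PLANT_TONNES_PER_YEAR, GLOBAL_PET_TONNES_PER_YEAR)
count = plants_needed(PLANNED_PLANT_TONNES_PER_YEAR, GLOBAL_PET_TONNES_PER_YEAR)
print(f"one plant handles {share:.3%} of annual PET production")
print(f"plants needed to match production: {count}")
```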

PET represents only about 8% of global plastic production. The much larger categories — polyethylene (PE, used in grocery bags, packaging film), polypropylene (PP, used in bottle caps, food containers), and polystyrene (PS, used in foam packaging) — are chemically different and more resistant to enzymatic attack. Their carbon-carbon backbone bonds are much stronger than PET's ester bonds, and no natural organism had evolved to break them — until recently.

In 2023, a team at Northwestern University discovered a bacterial enzyme capable of oxidising polyethylene, opening the first biotic attack surface on the world's most produced plastic. The enzyme, a lytic polysaccharide monooxygenase variant, was identified in wax moth larvae gut bacteria — the larvae can survive on polyethylene as a sole carbon source, which the researchers traced to this enzyme activity. The research is early-stage; the degradation rate is orders of magnitude too slow for industrial application. But the discovery of a naturally occurring PE-attacking enzyme confirms the same principle that PETase demonstrated: evolution can find these pathways, and synthetic biology can accelerate them.

The urgency of the plastic problem extends beyond environmental aesthetics. A 2022 study in Environment International found microplastics (particles under 5 mm) in 17 of 22 human blood samples tested, at concentrations of up to 1.6 micrograms per millilitre. A 2023 study found microplastics in every human placenta sampled. A 2024 paper in the New England Journal of Medicine found that patients with carotid artery plaque containing microplastics had a 4.5 times higher risk of heart attack, stroke, or death over roughly three years compared to patients without microplastics in their plaque. The correlation is established; the causal mechanism is not. The plastic crisis is no longer only an environmental problem. It is a public health problem.

Enzymatic recycling is one component of a broader plastic pollution response that includes redesigning products for circularity, reducing unnecessary single-use production, improving waste collection infrastructure in the countries (primarily South and Southeast Asia) that account for most ocean plastic input, and developing biodegradable plastic alternatives. No single intervention closes the loop. But enzymatic recycling has the potential to transform the economic proposition of plastic collection: if waste plastic becomes a valuable feedstock for chemical recovery rather than a disposal problem, the market incentives for collection and processing align with environmental outcomes for the first time. The bacteria that evolved in a Japanese recycling facility may have pointed the way to an industrial system that finally treats plastic waste as a resource rather than a burden.

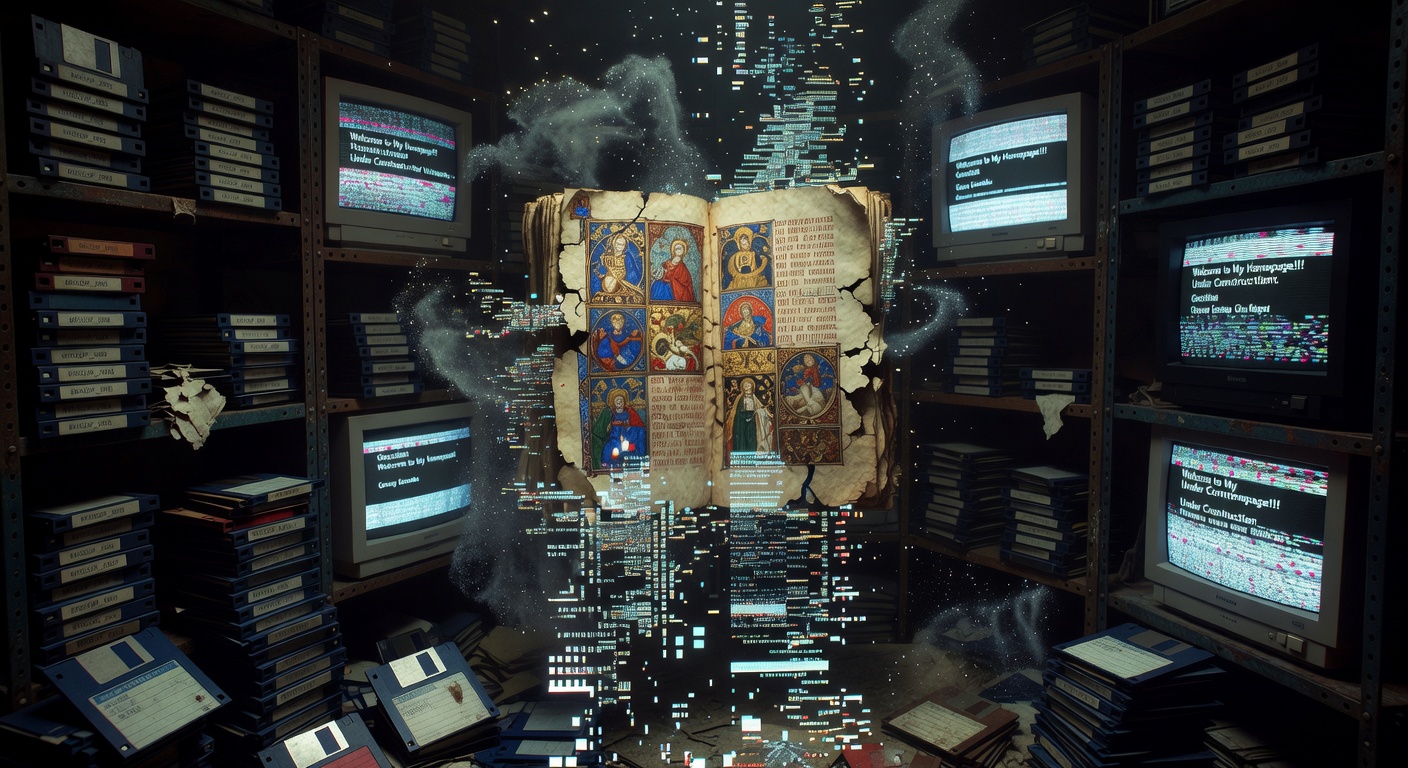

In October 2023, a study by the Pew Research Center found that 38% of web pages that existed in 2013 were no longer accessible a decade later. A quarter of all links in Wikipedia articles are broken. Half of all links cited in US Supreme Court opinions are dead. The Library of Congress receives millions of images, videos, and digital documents that it stores on servers and tape — but the formats those files were created in are often already obsolete, and the migration effort to keep them readable is ceaseless and underfunded. We live in the most information-rich period in human history, and we are losing vast quantities of that information constantly, invisibly, and largely without noticing. The ancient Egyptians built stone monuments that have lasted 4,000 years. We build websites that last four.

The term "digital dark age" was popularised by Vint Cerf, one of the founding architects of the internet, in a 2015 lecture where he expressed concern that the accelerating pace of software and format change means that digital content created today may become unreadable within decades, not because the bits have been lost, but because the software to interpret them no longer runs on modern hardware. A photograph from the 1850s can still be held up to the light and seen. A file created in an early version of Microsoft Word in 1992 may require significant technical archaeology to open. A website created in Flash, which Adobe discontinued in 2020 after 25 years, is now, for most purposes, gone forever.

Link rot — the disappearance of URLs that once pointed to accessible content — is the most visible manifestation of digital impermanence. The Pew 2023 study that found 38% of 2013 web pages missing was one of the most rigorous analyses of the problem to date. It examined a representative sample of pages across all major content categories and found disappearance rates that varied by type: social media posts (Twitter/X specifically) had disappearance rates exceeding 60% over a decade; government and institutional pages were more stable but still showed 20-25% disappearance rates over the same period.
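A ten-year loss figure can be turned into more intuitive numbers. The sketch below converts "38% gone after 10 years" into an equivalent yearly loss rate and a half-life, under the assumption that pages disappear at a constant rate. That assumption is a simplification made for illustration; the study itself reports a single snapshot, not a decay curve.

```python
import math


def annual_loss_rate(fraction_lost: float, years: float) -> float:
    """Constant yearly loss rate that compounds to the observed loss."""
    survival = 1.0 - fraction_lost
    return 1.0 - survival ** (1.0 / years)


def half_life_years(fraction_lost: float, years: float) -> float:
    """Years until half of the pages are gone, at that constant rate."""
    survival = 1.0 - fraction_lost
    return years * math.log(0.5) / math.log(survival)


rate = annual_loss_rate(0.38, 10)
half_life = half_life_years(0.38, 10)
print(f"equivalent yearly loss: {rate:.1%}")
print(f"half-life of a web page: {half_life:.1f} years")
```

The same two functions applied to the 60% figure for social media posts give a half-life of well under a decade.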

The implications for scholarship and legal documentation are severe. Academic citations to web content — increasingly common as journalism, grey literature, and primary data move online — become unreliable within years. A 2022 study in the Journal of the American Medical Informatics Association found that 26% of URLs cited in biomedical journal articles published between 2015 and 2020 were already inaccessible. Legal cases cited web pages; the pages change or disappear; the record of the argument is severed from the evidence that supported it.

"The printed page is remarkably robust. A book published in 1800 is generally still readable. A website published in 2000 has roughly a 50% chance of being inaccessible today. We have traded permanence for immediacy without fully reckoning with the cost." — Brewster Kahle, founder of the Internet Archive

The Internet Archive, founded by Brewster Kahle in 1996, runs the Wayback Machine, the closest thing the internet has to a permanent record. As of 2025, the Wayback Machine has archived over 900 billion web pages, and the Archive's wider collections hold 44 million books and texts, 14 million audio recordings, 8 million videos, and 4 million software programs. It is the largest public digital archive in history, operated by a non-profit organisation with an annual budget of approximately $30 million, a fraction of what a single large tech company spends on marketing.

The Archive operates on a model of benign presumption: it crawls the web continuously, archiving pages without explicit permission, on the theory that preservation is in the public interest. This has put it in legal conflict with content owners. In 2023, a US federal court ruled against the Internet Archive in a case brought by four major publishers over its digital lending library program — a decision that has threatened the Archive's financial sustainability and raised fundamental questions about the legal status of digital preservation.

The case exposed a fundamental tension: copyright law, designed for a world of physical objects, treats digital preservation as reproduction. Archiving a web page means creating a copy to prevent the original from disappearing, and under current doctrine that copy can count as infringement unless a defence such as fair use applies. The Internet Archive argues that its activities serve an obvious public interest; the publishers argue that the Archive's lending program was not preservation but distribution. The distinction matters enormously for the future of digital memory.

Beyond link rot lies a problem that is harder to fix: format obsolescence. Digital content requires software to interpret it. Software runs on hardware. Hardware changes. The chain of dependencies from a digital file to readable content is long, and each link in the chain can break independently of the others.

The computer game history community has documented this problem in painful detail. An estimated 87% of classic games — from the Atari and early PC era — are out of print and unavailable through legal channels. The only way to access them is through emulation (running old software on modern hardware through software simulation) or through preservation organisations. The Video Game History Foundation, which advocates for game preservation, has demonstrated that Nintendo's game licensing system — where old games can be purchased digitally for a fee — generates marginal revenue while preventing the development of robust preservation mechanisms. When Nintendo discontinued the Wii Virtual Console in 2019, the licensed access to hundreds of classic games simply disappeared.

Digital preservation professionals have developed a framework for sustainable long-term preservation that has three components: redundancy (multiple copies in multiple geographically distributed locations), format migration (actively converting content from obsolete formats to current standards on a rolling basis), and emulation (maintaining the ability to run obsolete software environments). Each of these is technically straightforward and operationally demanding. The Library of Congress, the British Library, the Internet Archive, and national archives worldwide are doing this work — but they are doing it with resources that are dwarfed by the volume of content being created.
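Redundancy only helps if someone checks that the copies still match. A common way to do that is a fixity check: record a cryptographic digest when an item is ingested, then periodically recompute it for every replica. The sketch below shows the idea with in-memory byte strings standing in for files at different sites. The site names and helper functions are hypothetical and do not describe any particular archive's system.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Hex digest used as the item's recorded fingerprint."""
    return hashlib.sha256(data).hexdigest()


def audit_replicas(replicas: dict, expected_digest: str) -> dict:
    """Map each replica name to True if its content still matches."""
    return {name: sha256_hex(content) == expected_digest
            for name, content in replicas.items()}


def repair_replicas(replicas: dict, expected_digest: str) -> dict:
    """Replace damaged replicas with a verified copy; fail if none survives."""
    status = audit_replicas(replicas, expected_digest)
    good = next((replicas[name] for name, ok in status.items() if ok), None)
    if good is None:
        raise RuntimeError("no intact replica left to repair from")
    return {name: good for name in replicas}


original = b"<html>archived page</html>"
recorded_digest = sha256_hex(original)       # stored at ingest time

replicas = {
    "site_a": original,
    "site_b": b"<html>archived pagf</html>",  # simulated bit rot
    "site_c": original,
}
print(audit_replicas(replicas, recorded_digest))
replicas = repair_replicas(replicas, recorded_digest)
print(audit_replicas(replicas, recorded_digest))
```

The check itself is cheap. The operational burden comes from running it continuously across billions of items and keeping enough independent copies that at least one always passes.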

Global internet traffic in 2025 is estimated at 600 exabytes per month. The entire physical archive holdings of the Library of Congress (17 million books, 5 million maps, 15 million photographs) amount to approximately 10 terabytes of text-equivalent data. At the estimated traffic rate, the internet moves that volume of data in a fraction of a second. No archive can save everything. The question is what we choose to prioritise and who decides.
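Taking the two figures quoted above at face value, a quick calculation shows how long the network needs to carry a Library-of-Congress-sized volume of data. The sketch assumes a 30-day month and decimal units (1 exabyte = 10^18 bytes). Note that traffic is not the same thing as newly created content, so this is a statement about scale rather than about what needs archiving.

```python
EXABYTE = 10 ** 18                        # bytes, decimal units
TERABYTE = 10 ** 12                       # bytes, decimal units
SECONDS_PER_MONTH = 30 * 24 * 60 * 60     # assumption: 30-day month

MONTHLY_TRAFFIC_BYTES = 600 * EXABYTE     # figure quoted above
LIBRARY_TEXT_BYTES = 10 * TERABYTE        # figure quoted above


def seconds_to_move(volume_bytes: float, monthly_traffic_bytes: float) -> float:
    """Time for the given monthly traffic rate to carry volume_bytes."""
    bytes_per_second = monthly_traffic_bytes / SECONDS_PER_MONTH
    return volume_bytes / bytes_per_second


elapsed = seconds_to_move(LIBRARY_TEXT_BYTES, MONTHLY_TRAFFIC_BYTES)
print(f"traffic rate: {MONTHLY_TRAFFIC_BYTES / SECONDS_PER_MONTH / TERABYTE:.0f} TB per second")
print(f"time to move 10 TB: {elapsed * 1000:.0f} milliseconds")
```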

The absence of a funded, politically mandated digital preservation institution — equivalent to a national library but for the entire digital commons — is one of the most consequential policy failures of the information age. National libraries receive public funding to preserve the printed record. The digital record has no equivalent public institution. The Internet Archive fills the gap on a shoestring; private companies archive their own content for commercial reasons; universities and libraries do what they can with library budgets. But there is no systematic, nationally funded effort to archive the digital public sphere — the news sites, social media archives, government documents, community forums, and independent publishers that constitute the actual record of how people lived and thought in the early 21st century.

Future historians may be able to reconstruct 19th-century public opinion in extraordinary detail from newspaper archives. They may find the early 21st century — despite its vast digital output — surprisingly opaque, not because the content wasn't created but because no institution was tasked with keeping it. The medieval scribes who copied ancient manuscripts in freezing monasteries preserved classical antiquity for the Renaissance. The digital age has no equivalent institution, and the window to build one is closing faster than most people realise.